Executive Summary: Why Small Language Models Are Disrupting AI

Small Language Models (SLMs) are one of the most significant shifts in applied artificial intelligence since the breakthrough of large language models like GPT-3. As organizations grapple with the computational costs, privacy concerns, and environmental impact of massive AI systems, compact alternatives are emerging that deliver comparable performance on many targeted tasks at a fraction of the resource requirements. This guide explores how efficient AI models are democratizing artificial intelligence, enabling on-device AI processing, and transforming business applications across industries.

What Are Small Language Models (SLMs)? Defining the New AI Paradigm

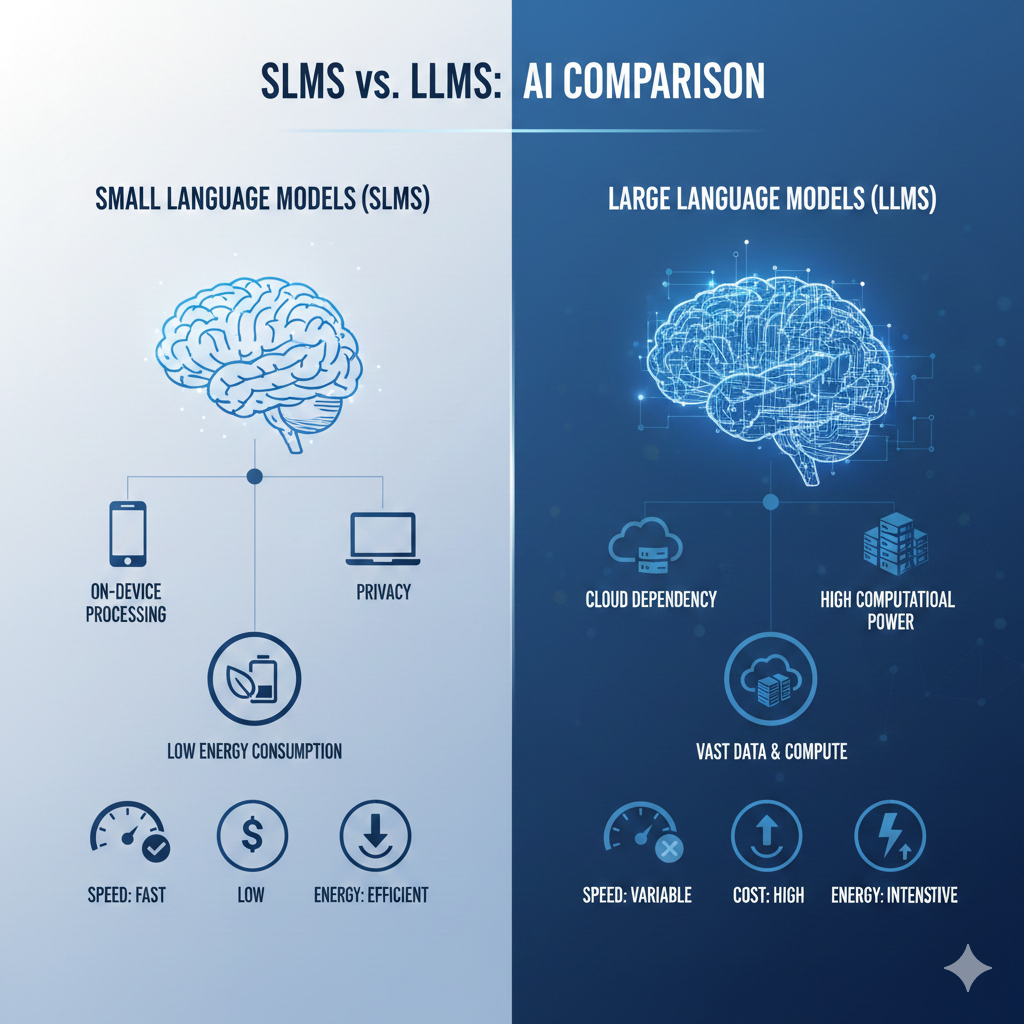

Small Language Models (SLMs) are AI systems with far fewer parameters than their large language model (LLM) counterparts. There is no fixed cutoff; this guide uses the term for models ranging from roughly 1 billion to 70 billion parameters, compared with LLMs that often exceed 100 billion parameters. Despite their compact size, these models achieve strong performance through architectural innovations, better training data curation, and optimization techniques. Their defining characteristics are:

- Parameter efficiency: Achieving similar capabilities with fewer parameters

- Specialized domain expertise: Optimized for specific tasks rather than general knowledge

- Edge deployment capability: Ability to run on consumer hardware without cloud dependency

- Reduced computational requirements: Lower energy consumption and carbon footprint
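To make the edge deployment point concrete, the sketch below estimates how much memory a model's weights occupy at different numeric precisions. It is back-of-the-envelope arithmetic, not a measurement: it assumes weight storage is simply parameters times bytes per parameter and ignores activations, the KV cache, and runtime overhead. The function name `weight_memory_gb` and the precision table are illustrative assumptions.

```python
# Rough weight-memory estimate: parameters x bytes per parameter.
# Assumption: ignores activations, KV cache, and runtime overhead,
# so real memory usage will be higher than these figures.

BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params: float, precision: str = "fp16") -> float:
    """Return approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


if __name__ == "__main__":
    models = [("3.8B model", 3.8e9), ("8B model", 8e9), ("70B model", 70e9)]
    for name, params in models:
        row = ", ".join(
            f"{p}: {weight_memory_gb(params, p):.1f} GB" for p in BYTES_PER_PARAM
        )
        print(f"{name} -> {row}")
```

Under these assumptions, an 8-billion-parameter model needs about 16 GB for its weights at 16-bit precision and about 4 GB at 4-bit precision, which is why models at the small end of the range can fit on consumer hardware.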

Figure: Visual comparison of SLMs vs LLMs

The Driving Forces Behind the SLM Revolution

1. Computational Efficiency and Cost Reduction

Training and deploying massive LLMs requires extraordinary resources. Google’s PaLM, with 540 billion parameters, is estimated to have cost tens of millions of dollars to train. In contrast, leading SLMs like Meta’s Llama 3.1 8B deliver strong performance on many tasks while requiring far less compute and memory to run.
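A rough way to see the inference-side gap is the common rule of thumb that a dense transformer's forward pass costs about 2 floating-point operations per parameter per generated token. The sketch below applies that approximation to the 540-billion and 8-billion parameter counts mentioned above. It is an order-of-magnitude estimate only: it ignores attention cost over long contexts, batching, and hardware utilization, and the function name is an illustrative assumption.

```python
# Rule-of-thumb estimate: forward-pass FLOPs per token ~= 2 x parameter count
# for a dense transformer. This is an approximation, not a benchmark.


def forward_flops_per_token(num_params: float) -> float:
    """Approximate floating-point operations needed to generate one token."""
    return 2.0 * num_params


large = forward_flops_per_token(540e9)  # 540B-parameter dense model
small = forward_flops_per_token(8e9)    # 8B-parameter dense model
ratio = large / small

print(f"Large model: {large:.2e} FLOPs per token")
print(f"Small model: {small:.2e} FLOPs per token")
print(f"Approximate compute ratio per token: {ratio:.1f}x")
```

Under this approximation the per-token compute ratio is simply the ratio of parameter counts, so serving cost scales roughly in proportion to model size.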

2. On-Device Processing and Privacy Preservation

The ability to run AI models locally on devices, from smartphones to IoT sensors, keeps sensitive data on the device instead of sending it to a cloud service. This addresses critical data privacy concerns and enables applications in regulated industries like healthcare and finance.

3. Environmental Sustainability

Smaller models generally consume less energy during both training and inference, aligning with global sustainability initiatives while maintaining competitive performance on targeted tasks.

4. Specialization Over Generalization

Many enterprise applications do not require encyclopedia-level knowledge but instead benefit from domain-specific optimization. SLMs can be fine-tuned for particular industries (legal, medical, technical), often providing better results within their specialization than a general-purpose model.

Leading Small Language Models Transforming the Industry

Meta’s Llama Series

Llama 3.1 (8B and 70B) marked a turning point, showing that smaller models with openly available weights could compete with much larger ones.

Microsoft’s Phi Series

Phi-3 mini (3.8B) demonstrates how carefully curated training data and an efficient architecture can let a small model match or outperform larger models on some benchmarks.

Google’s Gemma

Google’s Gemma models emphasize responsible AI with strong safety tools and easy cloud integration.

Mistral AI’s Models

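Mixtral 8x7B uses a sparse mixture-of-experts architecture: a router sends each token to two of the eight experts in every layer, so only roughly 13 billion of its approximately 47 billion parameters are active per token.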
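The routing idea behind a mixture-of-experts layer can be sketched in a few lines. The example below is a toy illustration in pure Python, not Mistral AI's implementation: the experts are simple scaling functions, the router scores are hard-coded instead of learned, and the names `top_k_route` and `moe_layer` are hypothetical. It shows the core mechanism of selecting the top-scoring experts, normalizing their scores with a softmax, and running only those experts.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def top_k_route(router_logits, k=2):
    """Pick the k highest-scoring experts and softmax-normalize their scores."""
    order = sorted(
        range(len(router_logits)), key=lambda i: router_logits[i], reverse=True
    )
    top = order[:k]
    weights = softmax([router_logits[i] for i in top])
    return list(zip(top, weights))


def moe_layer(token, experts, router_logits, k=2):
    """Run only the selected experts and return their weighted sum."""
    out = [0.0] * len(token)
    for idx, weight in top_k_route(router_logits, k):
        expert_out = experts[idx](token)  # unselected experts are never called
        out = [o + weight * v for o, v in zip(out, expert_out)]
    return out


# Toy setup (assumption): eight "experts" that each scale the input differently.
experts = [lambda x, s=s: [s * v for v in x] for s in range(1, 9)]
token = [1.0, 2.0]
router_logits = [0.1, 2.0, -1.0, 0.3, 1.5, 0.0, -0.5, 1.0]  # made-up scores

print(top_k_route(router_logits))
print(moe_layer(token, experts, router_logits))
```

Because only two of the eight experts run for each token, the compute per token is much lower than the total parameter count suggests, although all experts still have to be held in memory.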

Real-World Applications of SLMs

- Enterprise chatbots and customer support

- Marketing and content generation

- Code generation and developer tools

- Mobile and edge AI applications

- Academic research and education

Figure: Step-by-step SLM deployment workflow

Technical Advantages of SLMs

- Reduced hallucination rates within the narrow domain a model is fine-tuned for

- Faster inference times

- More efficient fine-tuning

- Advanced optimization techniques
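The list above does not name specific optimization techniques. One widely used example is weight quantization, which stores weights as low-precision integers plus a scale factor. The sketch below shows symmetric per-tensor int8 quantization in pure Python as an illustration of the idea; production toolkits use more elaborate schemes (per-channel scales, calibration data, 4-bit formats), and the function names here are assumptions.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from integers and the scale factor."""
    return [q * scale for q in quantized]


weights = [0.42, -1.27, 0.05, 0.9]  # made-up example values
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("quantized:", q)
print("scale:", scale)
print("max round-trip error:", max_error)
```

Each weight now takes one byte instead of two or four, at the cost of a rounding error of at most half the scale factor per weight.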

Challenges and Limitations of SLMs

- Limited general knowledge

- Weaker complex reasoning

- Multimodal integration challenges

- Less advanced tool usage

The Future of SLMs

Future AI systems will likely combine specialized SLMs with selective access to larger models, running on AI-optimized chips from NVIDIA, AMD, and Apple.
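The hybrid pattern described above can be sketched as a simple cascade: try the small model first and escalate to a larger model only when the small model is not confident. Everything in this example is hypothetical, including the `cascade` function, the confidence score, the 0.7 threshold, and the stub models that stand in for real inference calls; it is a minimal illustration of the control flow, not a recommended architecture.

```python
from dataclasses import dataclass
from typing import Callable, Tuple


@dataclass
class Answer:
    text: str
    source: str  # "small" or "large"


def cascade(
    prompt: str,
    small_model: Callable[[str], Tuple[str, float]],
    large_model: Callable[[str], str],
    threshold: float = 0.7,
) -> Answer:
    """Answer with the small model when confident, otherwise escalate."""
    text, confidence = small_model(prompt)
    if confidence >= threshold:
        return Answer(text, "small")
    return Answer(large_model(prompt), "large")


# Stub models (assumptions): stand-ins for a local SLM and a remote LLM.
def toy_small_model(prompt: str) -> Tuple[str, float]:
    known = {"reset password": "Use the account settings page."}
    if prompt in known:
        return known[prompt], 0.95
    return "", 0.1


def toy_large_model(prompt: str) -> str:
    return f"[large model answer for: {prompt}]"


print(cascade("reset password", toy_small_model, toy_large_model))
print(cascade("compare two merger clauses", toy_small_model, toy_large_model))
```

In a real system the confidence signal might come from token probabilities, a separate classifier, or task-specific rules; how to obtain a reliable score is the hard part and is left open here.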